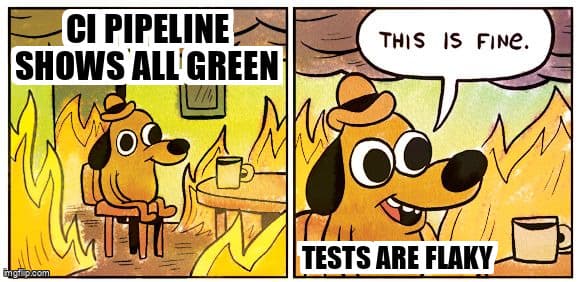

The Hidden Cost of Flaky Tests: Why Your CI/CD Pipeline is Lying to You

That green checkmark doesn't mean what you think it means

TL;DR: Flaky tests erode developer trust, slow deployments, and hide real bugs. They're not inevitable—they're symptoms of poor wait strategies, test isolation failures, and brittle selectors. Learn practical strategies to eliminate flakiness in Playwright, Selenium, and Cypress, and build a culture where test reliability is a core quality metric.

You've seen it happen. The test passes. Then it fails. You re-run it without changing a single line of code, and it passes again. Your CI/CD pipeline shows green, you deploy to production, and then everything breaks. Sound familiar?

Flaky tests are the silent killers of software quality. They're like that friend who says "I'll be there in 5 minutes" but shows up whenever they feel like it. Unreliable. Unpredictable. And slowly destroying your team's trust in the entire testing process.

What is the real cost of flaky tests?

Flaky tests erode developer confidence in CI/CD pipelines, waste 15-30 minutes per failure in investigation time, delay deployments, and hide genuine bugs that reach production.

When developers see a failing test, they have to make a choice: is this a real bug, or is the test just being flaky again? If your team has lost confidence in your test suite, they'll start clicking that "re-run" button instead of investigating. That's when real bugs slip through. This is exactly the kind of non-determinism menace that plagues modern development teams.

The hidden costs compound quickly:

- Lost developer time: Every flaky test failure costs 15-30 minutes of investigation time, multiplied by every developer who encounters it

- Deployment delays: Teams start ignoring test failures or requiring manual approval for every deploy

- Eroded trust: When tests can't be trusted, developers stop writing them or stop caring about test quality

- Hidden bugs: Real issues get dismissed as "probably just flaky" until they reach production

According to the 2025 State of DevOps Report, teams with high test flakiness rates (over 10% of tests showing intermittent failures) deploy 40% less frequently than teams with stable test suites.

Why do tests become flaky?

Tests become flaky due to timing issues, race conditions, shared state between tests, brittle selectors, and dependencies on external services with variable response times.

Understanding why tests become flaky is the first step to fixing them. Here are the most common culprits:

1. Timing Issues and Race Conditions

The classic "it works on my machine" problem. Your local dev environment is fast, but CI runs on slower infrastructure. Network requests take longer. Animations don't complete. Elements aren't ready when your test expects them.

In Playwright, Selenium, and Cypress, this often looks like:

- Clicking elements before they're interactive

- Asserting on data before API calls complete

- Reading DOM content while it's still updating

- Not waiting for animations or transitions to finish

2. Environmental Dependencies

Tests that depend on external state are tests waiting to fail. This includes:

- Shared test databases without proper cleanup

- Tests that produce different results when they run in a different order

- Reliance on third-party APIs that occasionally time out

- Date/time dependencies that fail when run at different times
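The date/time item is often the easiest of these to remove. A minimal sketch, assuming a hypothetical 14-day trial rule (isTrialActive, TRIAL_DAYS, and the dates are invented for illustration): the code under test takes a clock as a parameter, so a test can freeze time instead of depending on when the suite happens to run.

```typescript
// A clock is just a function returning the current time; tests pass a fixed one.
type Clock = () => Date;

// Hypothetical business rule used only for this example.
const TRIAL_DAYS = 14;
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Production callers omit `now` and get the real clock.
function isTrialActive(signupDate: Date, now: Clock = () => new Date()): boolean {
  const elapsedMs = now().getTime() - signupDate.getTime();
  return elapsedMs >= 0 && elapsedMs < TRIAL_DAYS * MS_PER_DAY;
}

// In a test, time is frozen, so the result is the same on every run.
const signup = new Date("2025-01-01T00:00:00Z");
const tenDaysLater: Clock = () => new Date("2025-01-11T00:00:00Z");
const fifteenDaysLater: Clock = () => new Date("2025-01-16T00:00:00Z");

console.log(isTrialActive(signup, tenDaysLater));     // true
console.log(isTrialActive(signup, fifteenDaysLater)); // false
```

The same idea applies to random numbers and generated IDs: pass the source of non-determinism in, and the test controls it.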

3. Brittle Selectors

When selectors break because of minor UI changes, you get intermittent failures that seem random but are actually caused by CSS class changes, dynamic IDs, or DOM structure shifts.

How can you eliminate test flakiness?

Eliminate flakiness by implementing proper wait strategies, isolating test state, using resilient selectors with data-testid attributes, and detecting flaky tests with automated monitoring tools.

Strategy 1: Use Auto-Waiting and Proper Wait Strategies

Modern testing frameworks have built-in auto-waiting, but you need to use it correctly:

Playwright (best auto-waiting):

- Automatically waits for elements to be actionable before interacting

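- Use locator assertions such as expect(locator).toBeVisible() for explicit waits, rather than the older waitForSelector

- Reserve waitForLoadState('networkidle') for complex page loads where no specific element signals readiness; Playwright's documentation discourages it as a default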

According to Playwright documentation, their auto-waiting implementation reduces common test flakiness patterns by 80% compared to manual timeout-based approaches.

Selenium:

- Always use explicit waits over implicit waits or hard-coded sleeps

- Use WebDriverWait with expected conditions

- Never use Thread.sleep(); it's a flakiness generator

Cypress:

- Automatically retries assertions until they pass or time out

- Use cy.intercept() with an alias to track specific network requests

- Leverage cy.wait('@aliasName') to wait explicitly for those aliased API calls
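All three frameworks share one idea: poll for a condition until a deadline, instead of sleeping for a fixed time and hoping. The sketch below is framework-agnostic and is not how any of these tools is implemented internally; waitUntil, its option names, and the simulated fetchOrderStatus are assumptions made for illustration.

```typescript
// Poll `condition` until it returns a truthy value or `timeoutMs` elapses.
async function waitUntil<T>(
  condition: () => T | Promise<T>,
  { timeoutMs = 5000, intervalMs = 50 } = {},
): Promise<T> {
  const deadline = Date.now() + timeoutMs;
  for (;;) {
    const result = await condition();
    if (result) return result;
    if (Date.now() >= deadline) {
      throw new Error(`Condition not met within ${timeoutMs} ms`);
    }
    // Short pause between checks; total wait adapts to how slow the system is.
    await new Promise((resolve) => setTimeout(resolve, intervalMs));
  }
}

// Simulated slow backend: the order becomes "ready" after roughly 120 ms.
const startedAt = Date.now();
const fetchOrderStatus = (): string =>
  Date.now() - startedAt >= 120 ? "ready" : "pending";

waitUntil(() => fetchOrderStatus() === "ready", { timeoutMs: 2000 })
  .then(() => console.log("order is ready, safe to assert"));
```

On a fast machine this returns almost immediately; on slow CI it waits as long as needed, up to the timeout. A fixed sleep gets one of those two cases wrong.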

Strategy 2: Implement Test Isolation

Every test should be completely independent. No shared state. No order dependencies. No leftover data from previous tests.

- Use database transactions that roll back after each test

- Clear browser storage, cookies, and cache between tests

- Use unique test data generators (timestamps, UUIDs) to avoid conflicts

- Mock external dependencies so tests don't rely on third-party availability
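For the unique-data item, a minimal sketch with no dependencies might look like this. uniqueId, buildTestUser, and the example.test domain are hypothetical names chosen for illustration.

```typescript
let sequence = 0;

// Build an identifier that differs on every call within a process (via the
// counter). The timestamp and random suffix make collisions across parallel
// workers or repeated runs unlikely, though not strictly impossible.
function uniqueId(prefix: string): string {
  sequence += 1;
  const time = Date.now().toString(36);
  const random = Math.random().toString(36).slice(2, 8);
  return `${prefix}-${time}-${sequence}-${random}`;
}

interface TestUser {
  email: string;
  username: string;
}

// Each test creates its own user, so no test depends on another's leftovers.
function buildTestUser(): TestUser {
  const id = uniqueId("user");
  return { email: `${id}@example.test`, username: id };
}

const firstUser = buildTestUser();
const secondUser = buildTestUser();
console.log(firstUser.email !== secondUser.email); // true
```

If your runtime provides a UUID generator, that is a reasonable replacement for the hand-rolled suffix here.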

Strategy 3: Build Resilient Selectors

Stop relying on CSS classes and brittle XPath. Use data attributes specifically for testing:

- Add data-testid attributes to important elements

- Use role-based selectors when possible (getByRole('button'))

- Prefer text content selectors for human-readable tests

- Avoid dynamic IDs or nth-child selectors that break with UI changes
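One way to keep brittle selectors out of the suite is to flag them in code review or a pre-commit check. The sketch below is a hypothetical heuristic, not a standard tool: the rule names and regexes are assumptions, and they will miss some brittle selectors and misjudge others.

```typescript
// Illustrative rules for the brittle patterns named above.
const BRITTLE_PATTERNS: Array<{ reason: string; pattern: RegExp }> = [
  { reason: "positional selector", pattern: /:nth-(child|of-type)\(/ },
  { reason: "absolute XPath", pattern: /^\/(html|body)\b/ },
  { reason: "generated-looking id", pattern: /#[\w-]*\d{3,}/ },
];

// Return the reasons a selector looks brittle; an empty array means no rule fired.
function brittleReasons(selector: string): string[] {
  return BRITTLE_PATTERNS
    .filter(({ pattern }) => pattern.test(selector))
    .map(({ reason }) => reason);
}

console.log(brittleReasons("ul > li:nth-child(3) a"));          // ["positional selector"]
console.log(brittleReasons("/html/body/div[2]/button"));        // ["absolute XPath"]
console.log(brittleReasons("#input-48213"));                    // ["generated-looking id"]
console.log(brittleReasons("[data-testid='checkout-button']")); // []
```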

Strategy 4: Detect and Quarantine Flaky Tests

You can't fix what you can't measure. Set up systems to identify flaky tests automatically:

- Track test failure rates over time - any test with inconsistent results is a candidate

- Use tools like pytest-flakefinder or CI analytics to identify patterns

- Quarantine flaky tests (mark them with @flaky tags) so they don't block deployments

- Create a dedicated "fix flaky tests" rotation or sprint goal
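If your CI can export run history, a simple signal is a test that both passed and failed on the same commit: the code did not change, so the test did. The sketch below assumes a hypothetical TestRun record shape; adapt the fields to whatever your CI reports.

```typescript
interface TestRun {
  testName: string;
  commit: string; // revision the run executed against
  passed: boolean;
}

interface FlakeReport {
  testName: string;
  flakyCommits: number; // commits where the test both passed and failed
  totalCommits: number;
  flakeRate: number;    // flakyCommits / totalCommits
}

function findFlakyTests(runs: TestRun[]): FlakeReport[] {
  // testName -> commit -> set of outcomes observed on that commit
  const outcomes = new Map<string, Map<string, Set<boolean>>>();
  for (const run of runs) {
    const byCommit = outcomes.get(run.testName) ?? new Map<string, Set<boolean>>();
    const seen = byCommit.get(run.commit) ?? new Set<boolean>();
    seen.add(run.passed);
    byCommit.set(run.commit, seen);
    outcomes.set(run.testName, byCommit);
  }

  const reports: FlakeReport[] = [];
  outcomes.forEach((byCommit, testName) => {
    let flakyCommits = 0;
    byCommit.forEach((seen) => {
      if (seen.size === 2) flakyCommits += 1; // pass and fail on identical code
    });
    if (flakyCommits > 0) {
      reports.push({
        testName,
        flakyCommits,
        totalCommits: byCommit.size,
        flakeRate: flakyCommits / byCommit.size,
      });
    }
  });
  return reports.sort((x, y) => y.flakeRate - x.flakeRate);
}

const sampleRuns: TestRun[] = [
  { testName: "checkout", commit: "abc123", passed: true },
  { testName: "checkout", commit: "abc123", passed: false },
  { testName: "checkout", commit: "def456", passed: true },
  { testName: "login", commit: "abc123", passed: true },
  { testName: "search", commit: "def456", passed: false },
  { testName: "search", commit: "def456", passed: false },
];

console.log(findFlakyTests(sampleRuns));
// [{ testName: "checkout", flakyCommits: 1, totalCommits: 2, flakeRate: 0.5 }]
```

Note that "search" fails consistently on the same commit, so it is not reported: that is a real failure to investigate, not a quarantine candidate.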

The 2025 Stack Overflow Developer Survey found that 67% of teams with mature testing practices implement automated flaky test detection, compared to only 23% of teams without such practices.

Strategy 5: Use Retry Logic Strategically

Retries are a double-edged sword. They can mask problems, but when used correctly, they can handle legitimate environmental variance:

- Good use: Retry network requests that might time out due to infrastructure issues

- Bad use: Retrying entire tests to hide timing problems

- Rule of thumb: If a test needs more than 2 retries to pass, it's flaky and needs fixing
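The "good use" case can be sketched as a retry wrapped around the single unreliable call rather than the whole test. withRetry, its defaults, and flakySeedRequest are hypothetical names; the default of three attempts (one try plus two retries) follows the rule of thumb above.

```typescript
// Retry only the operation that is allowed to be unreliable, with a hard cap
// on attempts so persistent failures still surface as errors.
async function withRetry<T>(
  operation: () => Promise<T>,
  maxAttempts = 3,
  baseDelayMs = 100,
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      return await operation();
    } catch (error) {
      lastError = error;
      if (attempt < maxAttempts) {
        // Exponential backoff: baseDelayMs, then 2x, then 4x, ...
        const delayMs = baseDelayMs * 2 ** (attempt - 1);
        await new Promise((resolve) => setTimeout(resolve, delayMs));
      }
    }
  }
  throw lastError;
}

// Simulated network call that times out twice before succeeding.
let seedCalls = 0;
const flakySeedRequest = async (): Promise<string> => {
  seedCalls += 1;
  if (seedCalls < 3) throw new Error("ETIMEDOUT");
  return "seeded";
};

withRetry(flakySeedRequest, 3, 10).then((result) => {
  console.log(`${result} after ${seedCalls} attempts`); // "seeded after 3 attempts"
});
```

Because the retry surrounds only the network call, a timing bug elsewhere in the test still fails on the first attempt instead of being hidden.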

In Playwright, you can configure retries globally or for a specific group of tests:

- Set retries in playwright.config.ts

- Use test.describe.configure({ retries: 2 }) in a file or describe block for specific flaky tests while you fix them

- Monitor retry rates - if tests consistently need retries, that's a red flag
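A minimal playwright.config.ts along these lines might look like the following. The specific values (two retries on CI only, the JSON report path) are assumptions to adjust for your project.

```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  // Retry only on CI, where infrastructure variance is more likely;
  // local runs fail fast so timing problems stay visible.
  retries: process.env.CI ? 2 : 0,

  use: {
    // Record a trace when a test is retried, so each retry leaves evidence.
    trace: 'on-first-retry',
  },

  // The JSON report can feed whatever tracks retry rates over time.
  reporter: [['list'], ['json', { outputFile: 'test-results/results.json' }]],
});
```

Playwright reports a test that fails and then passes on retry as flaky rather than passed, which gives you a starting list for the quarantine process described earlier.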

Building a Culture of Test Reliability

Technical solutions only work if your team prioritizes test quality. Here's how to build that culture:

- Make flaky tests a priority: Treat them like production bugs, not technical debt to ignore

- Add test reliability to your definition of done: A feature isn't complete until its tests are stable

- Review test code like production code: Tests deserve the same scrutiny as application logic

- Track and celebrate improvements: Measure test reliability metrics and recognize teams that improve them

The Bottom Line

Flaky tests are not inevitable. They're a symptom of technical decisions, architectural choices, and team priorities. Every flaky test in your suite is quietly eroding trust, slowing deployments, and hiding real bugs.

The good news? You can fix this. Start by identifying your flakiest tests, understanding their root causes, and applying the strategies above. Use proper wait strategies, isolate your tests, build resilient selectors, and create systems to detect and quarantine flakiness before it spreads.

Your CI/CD pipeline should be a source of confidence, not anxiety. When that green checkmark appears, it should mean something. Make your tests reliable, and you'll ship faster, deploy with confidence, and catch real bugs before they reach production.

Stop accepting flaky tests as a cost of automation. Start treating test reliability as a core quality metric. Your future self (and your team) will thank you. For more on building reliable test suites, see Test Wars Episode VII: Test Coverage Rebels.

Related Posts

Test Wars Episode V: The Non-Determinism Menace

Explore how non-deterministic behavior in AI-generated code creates testing challenges and learn strategies to maintain quality in uncertain environments.

Test Wars Episode VII: Test Coverage Rebels

Join the rebellion against meaningless test coverage metrics. Learn how to build meaningful test suites that actually catch bugs and prevent regressions.

Visual Regression Testing: Why Your Eyes Are Lying to You

Discover how visual regression testing catches UI bugs that slip past traditional functional tests. Learn practical implementation with Playwright, Percy, and Applitools.

Frequently Asked Questions

What causes flaky tests in CI/CD pipelines?

Flaky tests are primarily caused by timing issues, race conditions, shared test state, brittle selectors, and dependencies on external services. Poor wait strategies and non-isolated test environments are the most common culprits.

How do flaky tests impact deployment velocity?

Flaky tests slow deployments by requiring manual investigation of each failure, creating distrust in automated pipelines, and forcing teams to add manual approval gates. Each flaky failure costs 15-30 minutes of developer time.

What is the best way to fix flaky tests in Playwright?

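Use Playwright's built-in auto-waiting features, implement proper test isolation with database rollbacks, add data-testid attributes for resilient selectors, and wait on specific elements with assertions such as expect(locator).toBeVisible(), falling back to waitForLoadState('networkidle') only for complex page loads where nothing else signals readiness.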

Should I use retry logic for flaky tests?

Use retries strategically only for legitimate environmental variance like network timeouts. If a test needs more than 2 retries to pass consistently, it indicates underlying flakiness that must be fixed, not masked.

How can I detect flaky tests automatically?

Track test failure rates over time using CI analytics, use tools like pytest-flakefinder, monitor tests with inconsistent pass/fail patterns, and quarantine tests that show non-deterministic behavior for dedicated fixing.
