The $2M Testing Tax: Why Your 'Comprehensive' QA Strategy is Burning Cash

A no-holds-barred analysis of how enterprises waste millions on QA theater - running thousands of tests that catch zero production bugs while real issues slip through.

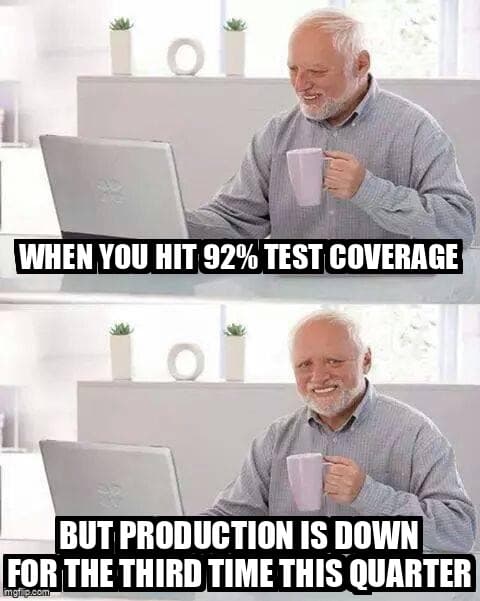

Let me tell you about a conversation I had last week with a CTO who proudly announced his team achieved 92% test coverage. Then, without a hint of irony, he complained about the three critical production bugs that took down his platform the same quarter.

His QA budget? $2.1 million annually. His actual prevented customer-impacting defects? By his own admission, "impossible to measure."

Welcome to QA theater, where the metrics are made up and the points don't matter.

What is the cost-per-prevented-defect calculation?

Cost-per-prevented-defect is your annual QA investment divided by the number of customer-impacting bugs your testing actually prevented from reaching production.

Here's the question that will make your VP of Engineering squirm: What's your cost-per-prevented-defect?

Not cost-per-test. Not cost-per-coverage-percentage-point. The actual ROI calculation:

Annual QA Investment ÷ Customer-Impacting Bugs Actually Prevented = Cost Per Prevented Defect

Most teams can't answer this. They can tell you how many tests they run (14,387!), how long the CI pipeline takes (47 minutes!), and what percentage of code is covered (89.3%!). But ask them how many production incidents were prevented by that test suite last month, and you get stammering.

According to the 2025 State of Software Quality Report, 68% of engineering teams cannot measure the actual bug prevention ROI of their QA investment, relying instead on vanity metrics like test count and coverage percentages.

That's not a testing strategy. That's a very expensive security blanket.

Why does 90% coverage correlate with 10% risk mitigation?

High test coverage measures lines executed during testing but fails to validate edge cases, integration points, or actual user workflows where production bugs actually occur.

The dirty secret of test coverage metrics: they measure the wrong thing entirely.

Coverage tells you what lines of code were executed during testing. It doesn't tell you:

- If the right assertions exist - I've seen 100% covered code with zero meaningful assertions

- If edge cases are tested - Happy path coverage is not risk mitigation

- If integration points are validated - Unit testing the Titanic's individual compartments

- If actual user workflows are verified - Nobody uses your app by calling isolated functions
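To make the "covered but unverified" point concrete, here is a minimal sketch. The `apply_discount` function and both tests are hypothetical, invented only for illustration. Both tests execute the same lines, so a line-coverage tool would score them the same, but only the second can fail when the logic is wrong.

```python
def apply_discount(price: float, percent: float) -> float:
    """Hypothetical business function, used only for illustration."""
    if percent < 0 or percent > 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


def test_covers_lines_but_checks_nothing() -> None:
    # Runs the happy path and the error branch, so every line of
    # apply_discount counts as covered...
    apply_discount(100.0, 10.0)
    try:
        apply_discount(100.0, 150.0)
    except ValueError:
        pass
    # ...but nothing is asserted, so a wrong result would still pass.


def test_checks_behavior() -> None:
    # Same lines executed, but a regression now fails the test.
    assert apply_discount(100.0, 10.0) == 90.0
    assert apply_discount(19.99, 0.0) == 19.99
    assert apply_discount(50.0, 100.0) == 0.0
```

If `apply_discount` were changed to add the discount instead of subtracting it, the first test would keep passing and the coverage number would not move.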

The real kicker? The bugs that actually hurt your business live in the 10% you're not testing. They lurk in:

- Race conditions between microservices

- Third-party API contract changes

- Browser-specific rendering issues

- Data migration edge cases

- Authentication state management

Meanwhile, you're maintaining 8,000 unit tests that verify your string concatenation works correctly. Congratulations.

What are the hidden costs of brittle test suites?

Brittle test suites consume 95% of QA budgets on maintenance, CI infrastructure, false positive investigation, and coverage-driven test writing, leaving only 5% for the bug prevention work that actually protects customers.

Let's talk about what that $2M is actually buying you.

In most organizations, the breakdown looks like this:

- 35% - Test maintenance: Updating tests after every refactor, because your tests are coupled to implementation details

- 25% - CI infrastructure: Running those 14,387 tests on every commit requires serious hardware

- 20% - False positive investigation: "Tests are red again, but it works on my machine"

- 15% - Actual test development: Writing new tests (mostly for coverage metrics)

- 5% - Meaningful quality work: Actually preventing bugs customers would experience

That last line? That's 5% signal against 95% noise. On a $2M budget, you're paying $1.9M for static that makes it harder to hear the real problems.
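The arithmetic behind that breakdown can be sketched in a few lines. The percentages are the ones listed above and the budget is the round $2M figure. The function and variable names are made up for this example.

```python
QA_BUDGET = 2_000_000  # the round $2M figure used in this article

# Percentages taken from the breakdown above.
BREAKDOWN = {
    "Test maintenance": 35,
    "CI infrastructure": 25,
    "False positive investigation": 20,
    "Actual test development": 15,
    "Meaningful quality work": 5,
}


def dollars_by_category(budget: int, breakdown: dict[str, int]) -> dict[str, int]:
    """Convert percentage shares into dollar amounts."""
    if sum(breakdown.values()) != 100:
        raise ValueError("breakdown percentages must sum to 100")
    return {name: budget * pct // 100 for name, pct in breakdown.items()}


spend = dollars_by_category(QA_BUDGET, BREAKDOWN)
signal = spend["Meaningful quality work"]
noise = QA_BUDGET - signal

for name, amount in spend.items():
    print(f"{name:<30} ${amount:>10,}")
print(f"Signal: ${signal:,}  Noise: ${noise:,}")
```

Swap in your own budget and percentages; the point is to see the dollar figure sitting next to the 5% line.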

The Flake Tax

Here's a fun exercise: Calculate how many engineer-hours your team spent last month investigating test failures that weren't real bugs.

I'll wait.

One team I consulted with tracked this religiously. Their flaky tests created 127 hours of investigation time in a single month. At a blended rate of $150/hour, that's $19,050 in a single month spent chasing ghosts. $228,600 annually.
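That calculation is easy to reproduce for your own team. A minimal sketch, using the 127 hours and $150/hour figures from the example above (the `flake_tax` name is just illustrative):

```python
def flake_tax(hours_per_month: int, blended_rate: int) -> tuple[int, int]:
    """Return (monthly, annual) cost of investigating non-bug test failures."""
    monthly = hours_per_month * blended_rate
    return monthly, monthly * 12


monthly_cost, annual_cost = flake_tax(hours_per_month=127, blended_rate=150)
print(f"Monthly: ${monthly_cost:,}  Annual: ${annual_cost:,}")
```

The annual figure assumes every month looks like the tracked one, which is the same simplification the numbers above make.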

According to Google's Testing Blog, teams with high test flakiness rates spend an average of 16-24% of their QA budget investigating false positives, with some organizations reporting flake rates as high as 45% in their E2E test suites.

Their solution? They bumped up the retry count in CI and called it "handled."

How do I shift from testing theater to ROI-driven quality?

Focus testing on actual user workflows with fast E2E tests, measure prevented defects instead of coverage, delete tests that have not caught bugs, and invest in production monitoring for faster detection.

So what does an actually effective QA strategy look like? One that prevents real problems instead of generating impressive dashboards?

1. Invert Your Testing Pyramid (Maybe)

The classic testing pyramid (lots of unit tests, fewer integration tests, minimal E2E) made sense when E2E tests were slow and brittle. Modern tooling has changed the game.

Consider this heresy: What if you invested heavily in fast, reliable E2E tests that actually validate user workflows, and wrote unit tests only for genuinely complex business logic?

One team made this shift and reduced their test suite from 12,000 tests to 800. Their CI time dropped from 45 minutes to 8 minutes. Their production bug rate dropped by 60%.

Turns out, testing how users actually use your software is more valuable than testing individual functions in isolation. Who knew?

2. Measure What Actually Matters

Stop celebrating test count and coverage. Start tracking:

- Production incidents caught in pre-prod: Did your tests actually prevent a real problem?

- Time from defect to detection: How fast do you catch issues?

- False positive rate: What percentage of test failures aren't real bugs?

- Test maintenance burden: How often do passing tests need updates?

- Cost per prevented defect: The ROI calculation that matters

If your monitoring dashboard doesn't show these metrics, you're measuring vanity, not value.
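As a rough sketch of how one of these metrics could be computed, the snippet below derives a false positive rate from triaged test failures. The `FailureRecord` shape and the sample records are assumptions made up for illustration; your CI system or issue tracker will store this differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureRecord:
    """One triaged test failure (hypothetical record shape)."""
    test_name: str
    real_bug: bool              # True if triage confirmed a genuine defect
    investigation_hours: float  # engineer time spent on triage


def false_positive_rate(records: list[FailureRecord]) -> float:
    """Share of test failures that were not real bugs."""
    if not records:
        return 0.0
    false_alarms = sum(1 for r in records if not r.real_bug)
    return false_alarms / len(records)


def wasted_hours(records: list[FailureRecord]) -> float:
    """Engineer hours spent investigating failures that were not real bugs."""
    return sum(r.investigation_hours for r in records if not r.real_bug)


# Made-up sample data, purely to show the calculation.
sample = [
    FailureRecord("test_login", real_bug=False, investigation_hours=3.0),
    FailureRecord("test_checkout", real_bug=True, investigation_hours=2.0),
    FailureRecord("test_search", real_bug=False, investigation_hours=1.5),
    FailureRecord("test_upload", real_bug=False, investigation_hours=4.0),
]

print(f"False positive rate: {false_positive_rate(sample):.0%}")
print(f"Hours spent on false positives: {wasted_hours(sample)}")
```

The hard part is not the math but the discipline of recording `real_bug` at triage time for every red build.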

3. Kill Your Darlings (Tests)

Every test has a maintenance cost. If a test hasn't caught a bug before it reached production in the last year, what value is it providing?

I recommend this exercise: Tag every test with the last time it prevented a production bug. After six months, delete everything untagged.

The screaming you hear will be loud. The reduction in waste will be significant.
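One way to sketch the tagging exercise, assuming you keep a simple mapping from test name to the date it last prevented a production bug (`None` for tests that never have). The mapping, the test names, and the one-year window are illustrative assumptions, not a prescribed format.

```python
from datetime import date, timedelta
from typing import Optional


def deletion_candidates(
    last_catch: dict[str, Optional[date]],
    today: date,
    window_days: int = 365,
) -> list[str]:
    """Tests that are untagged or whose last catch is older than the window."""
    cutoff = today - timedelta(days=window_days)
    return sorted(
        name
        for name, caught_on in last_catch.items()
        if caught_on is None or caught_on < cutoff
    )


# Hypothetical tags, for illustration only.
tags = {
    "test_checkout_flow": date(2025, 3, 10),
    "test_string_concat": None,
    "test_legacy_export": date(2023, 11, 2),
}

candidates = deletion_candidates(tags, today=date(2025, 6, 1))
print(candidates)
```

Treat the output as a review list rather than an automatic delete: the list shows which tests need to justify their maintenance cost.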

4. Shift Investment to Production Monitoring

Here's an uncomfortable truth: Some bugs will reach production. The question is how fast you detect and resolve them.

Instead of spending $1.5M trying to achieve 95% coverage (and still missing critical bugs), what if you spent:

- $800K on smart, focused testing of actual user workflows and business-critical paths

- $400K on production observability that detects issues in minutes, not hours

- $300K on automated rollback and feature flags to contain impact

You'd catch issues faster, contain them better, and probably reduce your overall defect impact by 70%.

The Framework: Calculate Your True Testing ROI

Ready to do the uncomfortable math? Here's your homework:

Step 1: Calculate Total QA Investment

- QA engineer salaries + benefits

- CI/CD infrastructure costs

- Test tooling and licenses

- Developer time spent on test maintenance (track it for one sprint, extrapolate)

- Time spent investigating false positives
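The line items above can be rolled up as in the sketch below. Every input value is a placeholder invented for this example, apart from the $150/hour blended rate borrowed from the flaky-test example earlier. The extrapolation assumes two-week sprints, so 26 per year.

```python
def annual_qa_investment(
    salaries_and_benefits: int,
    ci_infrastructure: int,
    tooling_and_licenses: int,
    maintenance_hours_per_sprint: int,
    false_positive_hours_per_sprint: int,
    hourly_rate: int,
    sprints_per_year: int = 26,  # assumes two-week sprints
) -> int:
    """Sum the Step 1 line items, extrapolating one tracked sprint to a year."""
    tracked_hours = maintenance_hours_per_sprint + false_positive_hours_per_sprint
    developer_time = tracked_hours * hourly_rate * sprints_per_year
    return (
        salaries_and_benefits
        + ci_infrastructure
        + tooling_and_licenses
        + developer_time
    )


# Placeholder inputs, not real data.
total = annual_qa_investment(
    salaries_and_benefits=1_200_000,
    ci_infrastructure=300_000,
    tooling_and_licenses=100_000,
    maintenance_hours_per_sprint=80,
    false_positive_hours_per_sprint=40,
    hourly_rate=150,
)
print(f"Total annual QA investment: ${total:,}")
```

One tracked sprint is a small sample, so consider repeating the measurement before trusting the extrapolated number.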

Step 2: Count Actual Prevented Defects

Go through your bug tracking system. For each production bug in the last quarter, ask: "Could our current test suite have caught this?"

Then count the bugs that were caught in staging/QA that match those patterns. That's your prevented defect count.

Be honest. "Could have" means the test exists and would have failed. Not "we could write a test for this."
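Here is one possible reading of that counting rule as code: a bug counts as prevented only if it was customer-impacting, was found before production, and was flagged by a test that already existed and failed. The `BugRecord` fields and sample entries are hypothetical; map them to whatever your bug tracker actually exports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BugRecord:
    """One bug from the tracker (hypothetical record shape)."""
    bug_id: str
    found_in: str               # "staging" or "production"
    customer_impacting: bool
    failing_test_existed: bool  # an existing test failed and flagged it


def count_prevented_defects(bugs: list[BugRecord]) -> int:
    """Count customer-impacting bugs stopped pre-production by an existing test."""
    return sum(
        1
        for bug in bugs
        if bug.found_in == "staging"
        and bug.customer_impacting
        and bug.failing_test_existed
    )


# Made-up records, for illustration only.
quarter = [
    BugRecord("BUG-1", "staging", True, True),
    BugRecord("BUG-2", "staging", True, False),    # found by manual poking
    BugRecord("BUG-3", "production", True, False), # escaped
    BugRecord("BUG-4", "staging", False, True),    # cosmetic, not customer-impacting
    BugRecord("BUG-5", "staging", True, True),
]

prevented = count_prevented_defects(quarter)
print(f"Prevented defects this quarter: {prevented}")
```

Note how strict the filter is: BUG-2 was caught before release, but no test caught it, so it does not count toward the suite's ROI.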

Step 3: Do the Division

Total QA Investment ÷ Prevented Defects = Cost Per Prevented Defect

If that number is over $10,000, you have a serious efficiency problem. If it's over $50,000, you're in QA theater territory.
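The division and the two thresholds above can be sketched as follows. The $2.1M budget comes from the CTO anecdote at the top of this article. The count of 30 prevented defects is a made-up input, since that CTO could not supply one.

```python
def cost_per_prevented_defect(total_investment: float, prevented_defects: int) -> float:
    """Total QA investment divided by prevented defects."""
    if prevented_defects <= 0:
        raise ValueError("need at least one prevented defect to compute a ratio")
    return total_investment / prevented_defects


def classify(cost: float) -> str:
    """Apply the $10,000 and $50,000 thresholds from this article."""
    if cost > 50_000:
        return "QA theater territory"
    if cost > 10_000:
        return "serious efficiency problem"
    return "below both warning thresholds"


# 30 prevented defects is a hypothetical input.
cost = cost_per_prevented_defect(total_investment=2_100_000, prevented_defects=30)
print(f"${cost:,.0f} per prevented defect: {classify(cost)}")
```

A prevented-defect count of zero raises an error on purpose: if you cannot name a single prevented defect, the ratio is undefined, which is itself the answer.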

Step 4: Optimize Ruthlessly

Now you have a baseline. Every QA decision can be evaluated against this metric:

- "Should we add this test?" → Will it reduce cost-per-prevented-defect?

- "Should we fix this flaky test?" → What's the investigation cost vs. delete cost?

- "Should we increase coverage?" → What's the marginal ROI?

The Uncomfortable Conversation

Here's what I want you to do Monday morning: Walk into your QA lead's office and ask them to calculate your cost-per-prevented-defect.

If they can't answer, you're probably wasting money. If they can answer and the number is terrifying, at least you know where you stand.

The goal isn't zero testing. The goal isn't even minimal testing. The goal is optimized testing - the right tests, catching the right bugs, at the right cost.

Everything else is theater. And at $2M per year, that's one expensive show.

Stop Burning Cash on QA Theater

Desplega.ai helps teams ship quality software without the waste. Our intelligent testing platform focuses on actual risk mitigation, not vanity metrics.

- Automated E2E testing that actually catches production bugs

- ROI tracking built into every test execution

- Production monitoring that detects issues in seconds

- Smart test selection that runs what matters

Ready to calculate your real testing ROI? Schedule a strategy call and we'll walk through the framework together.

Frequently Asked Questions

What is cost-per-prevented-defect in QA?

Cost-per-prevented-defect is your total QA investment divided by the number of customer-impacting bugs actually prevented, measuring the true ROI of your testing strategy.

Why does high test coverage not prevent production bugs?

Test coverage measures lines executed during testing, not whether meaningful assertions exist or if edge cases, integration points, and actual user workflows are validated.

How much do flaky tests cost organizations?

Organizations can spend over $200,000 annually investigating false positives from flaky tests. One team tracked 127 hours of ghost-chasing in a single month, which at a $150/hour blended rate comes to $228,600 a year.

What should I measure instead of test coverage?

Track production incidents caught in pre-prod, time from defect to detection, false positive rate, test maintenance burden, and cost per prevented defect.

Should I delete tests that have not caught bugs in a year?

Yes - every test has a maintenance cost. If a test has not prevented a production bug in 12 months, it is likely providing no value and should be removed.
