The QA Team is Dead. Long Live the QA Team.

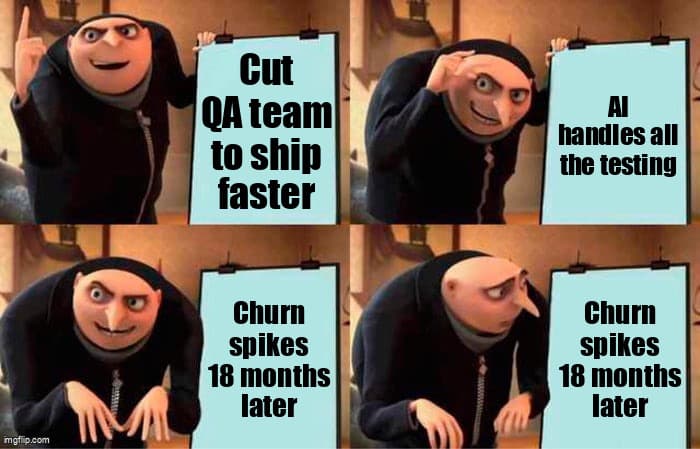

A satirical autopsy of the 'AI replaces QA' movement — and why the companies that believed it are now rebuilding what they destroyed.

It started with a slide deck. Forty-two pages. Lots of graphs trending up and to the right. The title read: “AI-Driven Testing: Eliminate Manual QA, Ship 3x Faster.”

The board loved it. The CFO especially. By Q3 2024, hundreds of engineering organizations had restructured QA out of existence — or close enough. The severance packages were signed. The Jira boards were archived. And the AI pipelines hummed.

Eighteen months later, production is on fire. Quietly. In the way only a trust collapse can burn — not with alerts and outages, but with churn numbers that nobody can explain in a post-mortem because there's no single incident to blame.

This is the autopsy of the “AI replaces QA” movement. And it's not pretty.

Why Did Everyone Believe AI Would Kill QA?

The belief was rational on its surface. AI could generate test cases, execute regression suites, identify visual regressions, and write Playwright scripts faster than any human. The logical conclusion: remove the humans who did those things.

The logical conclusion was wrong. But it was wrong in a specific, instructive way — and understanding exactly where the reasoning broke down is the only way to avoid repeating it.

The error wasn't about AI capability. AI can do those things. The error was confusing test execution with quality judgment. They are not the same job. They never were.

| What AI Can Do | What QA Engineers Do |
|---|---|
| Generate test cases from specs | Identify what specs got wrong |
| Execute regression suites at scale | Decide which regressions matter to users |
| Flag visual differences | Determine if differences are defects or intent |
| Report coverage percentages | Assess whether coverage addresses real risk |
| Run fast, consistently | Ask the uncomfortable question before release |

The uncomfortable question is the job. AI doesn't ask it. It answers what you ask.

Why Does the Cost Take 18 Months to Appear?

The cost of eliminating QA judgment doesn't appear in sprint velocity. It compounds silently in customer retention data 12 to 18 months after the organizational change, and it never shows up as a single incident you can post-mortem.

Here are the mechanics of the delay:

- Months 1-3: Velocity improves. Fewer process gates, faster deploys. The board deck writes itself. Everyone looks smart.

- Months 4-6: Small quality issues accumulate. Support tickets increase slightly. Each incident has an explanation. None of them mention “we removed QA.”

- Months 7-9: A pattern emerges in support data but nobody has time to analyze it. The team is shipping faster than ever.

- Months 10-12: Net Promoter Scores start declining. The customer success team flags “increasing friction” on renewal calls. Attribution is unclear.

- Months 13-18: Churn increases. The cohort analysis finally shows it: customers acquired after the QA restructuring churn at 1.4x the rate of earlier cohorts. The board asks questions. Nobody has good answers.
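
The cohort comparison in that last step can be sketched in a few lines. This is a minimal illustration, not a real analytics pipeline: the `Customer` record, the `churn_rate` and `churn_ratio` helpers, the cutoff date, and the data are all invented for the example, and the sample data is constructed so that it reproduces the 1.4x figure.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Customer:
    signup: date
    churned: bool  # True if the customer cancelled within the observation window


def churn_rate(customers):
    """Fraction of customers in the cohort that churned (0.0 for an empty cohort)."""
    if not customers:
        return 0.0
    return sum(c.churned for c in customers) / len(customers)


def churn_ratio(customers, restructure_date):
    """Churn rate of the post-restructuring cohort divided by the earlier cohort's."""
    before = [c for c in customers if c.signup < restructure_date]
    after = [c for c in customers if c.signup >= restructure_date]
    base = churn_rate(before)
    if base == 0:
        raise ValueError("earlier cohort has no churn; ratio is undefined")
    return churn_rate(after) / base


# Made-up data: 10 of 100 earlier customers churned, 14 of 100 later ones.
cutoff = date(2024, 9, 1)  # hypothetical restructuring date
customers = (
    [Customer(date(2024, 3, 1), i < 10) for i in range(100)]
    + [Customer(date(2024, 11, 1), i < 14) for i in range(100)]
)
print(round(churn_ratio(customers, cutoff), 2))  # 1.4
```

For the ratio to mean anything, both cohorts need the same observation window (for example, churn within the first 12 months after signup). Comparing an old cohort's lifetime churn against a new cohort's first few months would hide exactly the divergence described above.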

According to a 2025 Forrester Research report on engineering productivity, organizations that reduced QA headcount by more than 50% while increasing AI tooling saw no significant short-term quality degradation — but reported a 34% higher rate of “customer-reported critical defects” in the following 18-month window.

The Autopsy: What Actually Went Wrong

Let's be clinical. The organizations that failed didn't fail because AI is bad at testing. They failed for three specific reasons:

Failure Mode 1: Confusing Coverage With Confidence

AI-generated test suites reliably hit 80%+ line coverage. Engineering leaders reported coverage numbers to boards. What those numbers didn't measure: whether the tests covered the scenarios that actually mattered to users. Coverage without judgment is a very convincing lie.
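
A small, contrived example shows how full coverage and a user-facing defect can coexist. The `shipping_fee` function and its business rule (orders of 50 or more ship free) are assumptions made up for this sketch, not taken from any real system.

```python
def shipping_fee(order_total):
    """Intended rule (assumed for this example): orders of 50 or more ship free."""
    if order_total > 50:  # bug: the rule calls for >= 50
        return 0.0
    return 4.99


def test_free_shipping():
    assert shipping_fee(80) == 0.0


def test_paid_shipping():
    assert shipping_fee(20) == 4.99


# Both tests pass, and together they execute every line and both branches,
# so a coverage report shows 100%. The boundary a user is most likely to
# notice (an order of exactly 50) is never checked.
test_free_shipping()
test_paid_shipping()
print(shipping_fee(50))  # 4.99, although the intended rule says 0.0
```

Nothing in the coverage number distinguishes this suite from one that tests the boundary. Knowing that "exactly 50" is the case worth testing comes from understanding the rule, which is the judgment the metric cannot report.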

Failure Mode 2: Eliminating the Advocate Role

QA engineers serve a structural role in engineering organizations that has nothing to do with running tests: they are the organizational advocate for the user experience. When that role disappears, nobody is paid to slow down and ask “but what does this feel like to someone who doesn't know our system?” AI doesn't ask that question. Product managers are too close to the feature. Developers are solving the technical problem. The advocate chair sits empty.

Failure Mode 3: Misunderstanding What AI Testing Tools Replace

AI testing tools were designed to replace manual test execution — the repetitive, script-following work of running the same regression suite every sprint. They were not designed to replace test strategy, risk assessment, or the decision about what to test in the first place. Organizations that eliminated QA engineers eliminated the people who make AI tools useful, not the people AI tools make redundant.

What the Survivors Did Differently

The organizations that came through the 2024-2025 AI automation wave with quality intact share a common pattern. It's not complicated, but it required resisting significant pressure.

According to the 2025 State of Software Quality report by SmartBear, companies that maintained QA engineering headcount while adding AI tooling budgets reported 41% fewer production incidents and 28% higher customer satisfaction scores compared to organizations that reduced QA headcount by more than 40%.

The survivor pattern:

- Eliminated manual test executors (the people running scripts by hand)

- Kept QA engineers who could own test strategy and AI tool oversight

- Increased AI tooling budget — Copilot for test generation, Applitools for visual regression, LLM-assisted coverage analysis

- Redefined QA engineer job descriptions around judgment, not execution

- Maintained the advocate role in product review cycles

The org chart changed. The headcount math changed. But the judgment layer stayed.

What the New QA Org Actually Looks Like

The modern QA organization in 2026 is not the QA organization of 2020. It's smaller in some ways, more technical in others, and differently distributed across the engineering org.

| Role | 2022 Headcount | 2026 Headcount | Change |
|---|---|---|---|
| Manual QA Testers | High | Minimal | Reduced: AI handles this |
| QA Engineers (Strategy) | Medium | Same or higher | Maintained: judgment layer |
| SDET / Automation Engineers | Medium | Medium | Role evolved to AI oversight |
| AI Testing Tools Budget | Low | High | Significant increase |

The punchline the CFO didn't see coming: the total cost of a well-structured AI-augmented QA org is not dramatically lower than before. It's differently allocated — less in salaries for test execution, more in tooling and senior engineering judgment. The cost savings of eliminating manual testers are largely offset by tooling costs and the premium on senior QA engineers who can operate AI systems effectively.

How to Make the Case for Rebuilding Without Admitting a Mistake

If you're reading this in a cold sweat because this is your company — you're not alone, and there is a path forward that doesn't require standing in front of your board and saying “we were wrong.”

The framing that works:

- Phase language, not correction language. “In phase one, we optimized for velocity. We're now entering phase two, where we optimize for trust and retention.” This is technically accurate and completely true.

- Lead with churn data, not testing philosophy. Boards don't care about test coverage. They care about the cohort analysis showing 18-month churn divergence. Start there.

- Reframe the hire as AI governance. You're not hiring QA testers back. You're hiring AI Testing Systems engineers who ensure your automated quality pipeline is operating correctly. Different job title, different budget conversation.

- Quantify the cost of a trust collapse. If a 1-point NPS drop correlates to X% churn, and churn costs Y in ARR, the math for investing in quality capacity becomes straightforward. Do the math before the board meeting.

- Show competitor data. Companies that maintained QA engineering through the AI wave are now marketing reliability as a differentiator. This is a competitive angle your board will respond to.
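
The "do the math" step in the list above might look like the sketch below. It assumes a linear relationship between NPS and churn, which is a simplification to validate against your own data; the function name `arr_at_risk` and every input value are hypothetical placeholders for the X and Y in that bullet.

```python
def arr_at_risk(nps_drop_points, churn_pct_per_nps_point, arr):
    """Estimated annual recurring revenue lost to the extra churn.

    Assumes each NPS point lost adds a fixed percentage of churn,
    which is a simplification rather than an established law.
    """
    extra_churn = nps_drop_points * churn_pct_per_nps_point / 100
    return extra_churn * arr


# Hypothetical inputs: 6-point NPS drop, 0.5% extra churn per point, 20M ARR.
loss = arr_at_risk(nps_drop_points=6, churn_pct_per_nps_point=0.5, arr=20_000_000)
print(f"{loss:,.0f}")  # 600,000
```

Setting that number next to the fully loaded cost of the quality hires you are proposing gives the board a single comparison to react to.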

The Technical Stack of a Modern QA-Augmented Team

For engineering leaders rebuilding in 2026, the tooling landscape has matured considerably. The stack that survivors are running:

# Modern AI-Augmented QA Stack (2026)

Test Generation:

- GitHub Copilot (test scaffolding from implementation)

- LLM-assisted edge case generation (GPT-4o, Claude)

- Katalon AI (natural language to test)

Test Execution:

- Playwright (browser automation, auto-waiting)

- Vitest / Jest (unit + integration)

- k6 (performance, load testing)

AI Oversight Layer:

- Custom LLM pipeline for coverage gap analysis

- Flaky test detection + root cause classification

- Release risk scoring based on change surface area

Visual QA:

- Applitools Eyes (AI visual regression)

- Percy (snapshot comparison)

Observability:

- Datadog RUM (real user monitoring)

- Sentry (production error tracking)

- FullStory (session replay for QA investigation)

Human Judgment Touchpoints:

- Pre-release risk review (QA engineer + PM)

- Weekly coverage strategy review

- Monthly AI pipeline audit

Notice the last section. Human judgment touchpoints are explicitly scheduled. They don't happen automatically; they require organizational commitment to maintain. That commitment is the thing that got cut in 2024.
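
Of the oversight-layer items in the stack, flaky test detection is the simplest to illustrate. The sketch below uses one common working definition, a test that both passes and fails on the same commit. The `find_flaky_tests` helper and the `(test_name, commit_sha, passed)` record shape are assumptions for this example, not the export format of any particular CI system.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Return test names that both passed and failed on the same commit.

    `runs` is an iterable of (test_name, commit_sha, passed) tuples, a
    hypothetical record shape; adapt it to whatever your CI exports.
    """
    outcomes = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    return sorted(
        {name for (name, _), seen in outcomes.items() if seen == {True, False}}
    )


runs = [
    ("test_checkout", "abc123", True),
    ("test_checkout", "abc123", False),  # same code, different result: flaky
    ("test_login", "abc123", False),
    ("test_login", "def456", True),  # result changed with the code: not flaky
]
print(find_flaky_tests(runs))  # ['test_checkout']
```

The script only produces a list. Deciding whether a flaky test gets fixed, quarantined, or deleted is one of the calls that belongs in the scheduled review meetings.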

Key Takeaways

- AI accelerates QA; it cannot replace the judgment layer. Test execution and quality judgment are different jobs. AI handles the first. Humans must do the second.

- The cost of dismantling QA appears in churn, not sprints. Velocity metrics can look great for the first year. The bill arrives in cohort retention data 12 to 18 months after the change.

- Companies that kept QA engineers and added AI tools outperformed. The winning model is augmentation, not replacement. The 2025 SmartBear data is unambiguous on this.

- The new QA org has fewer manual testers, the same number of QA engineers (or more), and more tooling. Restructure the execution layer. Do not restructure the judgment layer.

- You can make the board case without admitting a mistake. Frame as organizational maturation. Lead with churn data. Quantify the cost of trust collapse.

The One Sentence Summary

AI made the wrong parts of QA obsolete — the repetitive execution parts — and the companies that understood this distinction in 2024 are now winning on reliability while everyone else rebuilds what they destroyed.

The QA team is dead. Long live the QA team.

Just make sure you know what you're burying before you hold the funeral.

Frequently Asked Questions

Can AI fully replace QA engineers in software development?

No. AI automates test execution and generation but cannot replace QA engineers' judgment on edge cases, user experience, and business risk assessment. The optimal model combines both.

Why did companies that eliminated QA teams see increased churn 12-18 months later?

Quality defects compound silently. Bugs that slip to production erode user trust gradually, showing up in churn metrics 12-18 months after the organizational change, not in immediate sprint velocity.

What does the modern QA org chart look like in 2026?

Fewer manual testers, same number of QA engineers, more AI tooling budget. The role shifted from test execution to test strategy, AI tool oversight, and quality system design.

How do I justify rebuilding QA capacity to my board without admitting a mistake?

Frame it as organizational maturation: 'We optimized for velocity in phase one. Phase two optimizes for trust and retention.' Lead with churn data, not testing philosophy.

Which AI testing tools work best alongside QA engineers?

Copilot-assisted test generation, AI-powered visual regression (Percy, Applitools), and LLM-based test coverage analysis augment engineers best without replacing their judgment layer.
