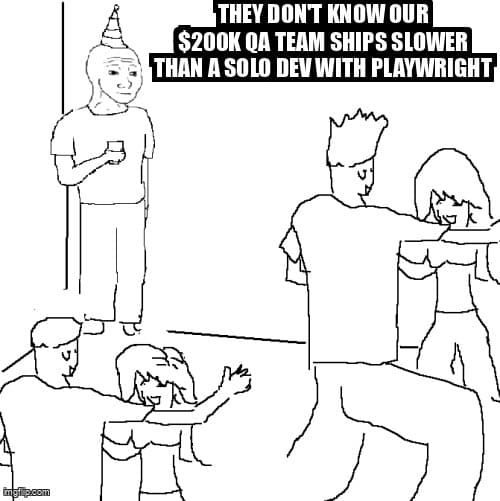

The $200K Testing Team That Ships Slower Than a Solo Dev: When Process Becomes Performance Theater

How enterprise QA organizations justify massive budgets while delivering less value than a single developer with basic automation

Let me tell you about a company I consulted for last year. They had a QA team of six people. Budget: roughly $200,000 annually when you factor in salaries, tooling licenses, and overhead. Their release cycle? Six weeks. Meanwhile, their competitor—a startup with one developer writing tests alongside features—was shipping twice a week with fewer production incidents.

The kicker? The enterprise team proudly reported "87% test coverage" in their quarterly metrics. The solo dev? Never measured it. They just knew their shit worked because they tested what mattered and shipped when it was ready.

This isn't an isolated case. It's a pattern I've seen repeatedly across mid-sized companies that have "matured" their QA function to the point of organizational paralysis. The symptoms are always the same: impressive org charts, sophisticated tooling stacks, meticulous documentation processes—and glacial delivery velocity.

The Performance Theater Problem

Here's what happens when a QA team optimizes for looking productive rather than being productive:

- Meeting Theater - Daily standups, grooming sessions, retrospectives, planning meetings. The QA team spends 40% of their time coordinating testing rather than actually testing.

- Documentation Theater - Test cases written in enterprise test management tools that nobody reads but everyone maintains. Hundreds of hours updating test plans that could be expressed in 50 lines of code.

- Metrics Theater - Dashboards showing test coverage percentages, defect density ratios, and test execution rates. None of them answer: "Can users successfully complete critical workflows?"

- Process Theater - Every code change requires a QA ticket, formal test plan, and sign-off process. Three days to get approval to test a button color change.

The solo developer? They write a Playwright test that covers the user flow, run it locally, see it pass, and ship. Total time: 20 minutes.

Real Example: The Button That Took Three Weeks

A developer needed to add a "Cancel Subscription" button to a settings page. Implementation: 30 minutes. The QA process that followed:

- Week 1: QA ticket created, prioritized in grooming, test plan drafted

- Week 2: Test cases written in Zephyr, reviewed by QA lead, environments provisioned

- Week 3: Testing executed, one minor defect found (tooltip typo), fixed, retested, signed off

A solo dev would have written an E2E test that checks the button exists, clicks it, verifies the modal appears, and confirms cancellation. Done in 20 minutes. Shipped immediately.

The Job Security Optimization

Let's be honest about the incentive structure here. Enterprise QA teams are often optimizing for the wrong thing: organizational indispensability rather than product quality.

Think about it from the QA manager's perspective. If you automate everything efficiently, make testing fast, and enable developers to handle most quality validation themselves... what happens to your team's headcount? What happens to your budget next quarter?

The rational response—even if nobody admits it—is to create processes that justify your team's existence:

- Make testing a bottleneck - If everything must pass through QA, QA becomes critical infrastructure. Slow releases? That's just thoroughness.

- Complicate the tooling - Enterprise test management systems that require specialized knowledge to operate. Only QA can navigate the complexity.

- Expand the scope - Insist on testing things that don't need testing. Every CSS change needs regression testing. Every API parameter needs boundary value analysis.

- Defend the metrics - When someone questions the value, point to the dashboards. Look at all these tests we run! Look at this coverage percentage! (Ignore that production bugs aren't decreasing.)

Meanwhile, the solo developer optimizes for shipping working software. Their incentive is clear: if it breaks in production, they own it. There's no QA team to blame. This creates a brutally honest relationship with quality.

The Coverage Percentage Myth

I need to address the elephant in every QA dashboard: test coverage percentage. This metric has caused more waste than any other in software engineering.

Here's what 87% test coverage actually means: "Our tests executed 87% of the lines (or branches) in the codebase." Here's what it doesn't mean: "The software works correctly" or "Users can successfully complete their tasks" or "We won't have production incidents."

The Coverage Theater Example

Company A has 87% test coverage and ships every 6 weeks. Company B has "unknown coverage" (never measured) and ships daily. Company B has 40% fewer production incidents.

Why? Company B tests behavior, not code paths. They have 20 E2E tests covering critical user journeys. Company A has 2,000 unit tests verifying implementation details that change frequently.

When Company A refactors code, they spend days updating tests that break even though functionality didn't change. Company B's tests keep passing because user behavior didn't change.

The solo developer doesn't optimize for coverage percentage. They write tests that fail when users would be unhappy. That's it. That's the whole strategy. And it works.

```javascript
// Enterprise QA Team Test (optimizing for coverage)
// Uses Jest-style globals (describe/it/expect); the import path is a placeholder.
import { User } from './user';

describe('UserService', () => {
  it('should set firstName property', () => {
    const user = new User();
    user.setFirstName('John');
    expect(user.firstName).toBe('John');
  });

  it('should set lastName property', () => {
    const user = new User();
    user.setLastName('Doe');
    expect(user.lastName).toBe('Doe');
  });

  it('should set email property', () => {
    const user = new User();
    user.setEmail('john@example.com');
    expect(user.email).toBe('john@example.com');
  });

  // 47 more tests like this...
});
```

```javascript
// Solo Developer Test (optimizing for user value)
import { test, expect } from '@playwright/test';

// Relative URLs assume baseURL is set in the Playwright config.
test('user can create account and log in', async ({ page }) => {
  await page.goto('/signup');

  // Fill in the signup form the way a real user would
  await page.fill('[name="firstName"]', 'John');
  await page.fill('[name="lastName"]', 'Doe');
  await page.fill('[name="email"]', 'john@example.com');
  await page.fill('[name="password"]', 'SecurePass123');
  await page.click('button:has-text("Create Account")');

  // The user should land on the dashboard and be greeted by name
  await expect(page).toHaveURL('/dashboard');
  await expect(page.locator('h1')).toContainText('Welcome, John');
});
```

The enterprise team spent hours writing 50 unit tests that verified every setter method. The solo dev wrote one test that verified the feature actually works from the user's perspective. Guess which one catches more real bugs?

The Six-Week Release Cycle Isn't About Quality

Let's call it what it is: long release cycles are about organizational fear and political cover, not software quality.

When something goes wrong in production at a company with a 6-week release cycle, here's what happens:

- Product blames Engineering for not specifying requirements clearly

- Engineering blames QA for not catching the bug

- QA blames lack of time/resources/tooling

- Everyone agrees to add more process to prevent it from happening again

- The release cycle becomes 7 weeks

When something goes wrong at a startup where a solo dev ships daily:

- The dev fixes it immediately because they know exactly what changed

- They add a test to prevent regression

- They ship the fix in 20 minutes

- Total downtime: 25 minutes instead of waiting for the next sprint

The paradox: slower release cycles create more risk, not less. When you batch changes over weeks, identifying which change caused a production issue becomes archaeology. When you ship small changes frequently, the blast radius is tiny and root cause is obvious.
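To put rough numbers on that blast radius, here is a minimal sketch. It assumes a steady rate of merged changes, and both the function name and the figures are illustrative rather than taken from any real team.

```typescript
// Number of changes bundled into a single release. When that release breaks
// production, every one of them is a suspect.
function suspectsPerRelease(changesPerDay: number, workingDaysBetweenReleases: number): number {
  if (changesPerDay < 0 || workingDaysBetweenReleases <= 0) {
    throw new Error("expected a non-negative change rate and a positive release interval");
  }
  return changesPerDay * workingDaysBetweenReleases;
}

// Assumed rate: 2 merged changes per working day.
const sixWeekBatch = suspectsPerRelease(2, 30); // six working weeks between releases
const dailyShip = suspectsPerRelease(2, 1);     // ship every working day

console.log(`Six-week release: ${sixWeekBatch} suspect changes`); // 60
console.log(`Daily release: ${dailyShip} suspect changes`);       // 2
```

With the same team and the same change rate, the six-week batch leaves 30 times as many candidates to dig through when something breaks.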

The Real Reason for Long Cycles

Six-week release cycles exist to diffuse blame. If something goes wrong, you can point to "the process" and say it was followed correctly. Nobody gets fired for following the approved process, even if the process is objectively terrible.

Daily deployments require ownership. If you shipped the code and you wrote the tests and you broke production... there's nobody else to point at. This terrifies large organizations. It delights solo developers because they can fix their own mistakes immediately.

ROI Metrics CTOs Should Actually Demand

If you're a CTO evaluating your QA organization, stop looking at vanity metrics. Here are the questions that actually matter:

1. Mean Time to Production (MTTP)

From code complete to live in production, what's the average time? If your QA process adds days or weeks to this metric, what's the business cost?

Good: Hours to 1 day

Acceptable: 2-3 days

Red flag: Weeks
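If you already record when a change is code complete and when it goes live, MTTP is a simple average. The sketch below assumes a hypothetical `ShippedChange` record; the field names and sample timestamps are invented for illustration.

```typescript
// Hypothetical record shape: one entry per change that reached production.
interface ShippedChange {
  codeCompleteAt: Date; // when the change was declared code complete
  deployedAt: Date;     // when it went live in production
}

const MS_PER_HOUR = 60 * 60 * 1000;

// Mean time to production, in hours, across a set of shipped changes.
function meanTimeToProductionHours(changes: ShippedChange[]): number {
  if (changes.length === 0) {
    throw new Error("need at least one shipped change");
  }
  const totalMs = changes.reduce(
    (sum, c) => sum + (c.deployedAt.getTime() - c.codeCompleteAt.getTime()),
    0,
  );
  return totalMs / changes.length / MS_PER_HOUR;
}

// Illustrative data only: one change waited 4 hours, the other 20 hours.
const sampleChanges: ShippedChange[] = [
  { codeCompleteAt: new Date("2024-03-04T09:00:00Z"), deployedAt: new Date("2024-03-04T13:00:00Z") },
  { codeCompleteAt: new Date("2024-03-05T10:00:00Z"), deployedAt: new Date("2024-03-06T06:00:00Z") },
];

const mttpHours = meanTimeToProductionHours(sampleChanges);
console.log(`MTTP: ${mttpHours} hours`); // 12, inside the "hours to 1 day" band
```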

2. Test Suite Execution Time

How long does it take to run your full test suite? If it's more than 15 minutes, developers stop running tests locally. If it's more than an hour, you've created a bottleneck that slows every change.

A solo dev with a well-architected test suite runs full tests in under 10 minutes. Enterprise teams with "comprehensive" test suites run overnight builds. Guess who finds bugs faster?

3. Production Incident Rate (normalized by deploy frequency)

Don't just count production bugs. Normalize by how often you deploy. A team deploying once a month with 5 incidents has a worse quality process than a team deploying daily with 10 incidents over that same month.

Calculate: (Production incidents / Number of deployments) × 100 = Incident rate percentage

Good: Under 2% (fewer than 2 incidents per 100 deploys)

Acceptable: 2-5%

Red flag: Over 5%
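The formula and bands above translate directly into code. This is a sketch with made-up function names and sample counts, not a standard tool.

```typescript
type IncidentBand = "good" | "acceptable" | "red flag";

// Incidents per 100 deployments, following the formula above.
function incidentRatePercent(incidents: number, deployments: number): number {
  if (deployments <= 0) throw new Error("deployments must be positive");
  return (incidents * 100) / deployments;
}

// Bands follow the thresholds above: under 2%, 2-5%, over 5%.
function incidentBand(ratePercent: number): IncidentBand {
  if (ratePercent < 2) return "good";
  if (ratePercent <= 5) return "acceptable";
  return "red flag";
}

// Illustrative numbers: the same 3 incidents, very different deploy counts.
const frequentRate = incidentRatePercent(3, 200); // 1.5
const batchedRate = incidentRatePercent(3, 12);   // 25

console.log(`${frequentRate}% -> ${incidentBand(frequentRate)}`); // 1.5% -> good
console.log(`${batchedRate}% -> ${incidentBand(batchedRate)}`);   // 25% -> red flag
```

The raw incident count is identical in both cases; only the normalization separates the two teams.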

4. QA Team Leverage

For every QA person, how many developers are shipping confidently? If you have a 6-person QA team supporting 15 developers, that's a 2.5:1 ratio. Solo devs have infinite leverage because they've automated the QA function.

Aim for: 10:1 or higher (10 devs per QA specialist)

Red flag: Under 5:1

5. Test Maintenance Burden

When you refactor code without changing behavior, how many tests break? If your test suite is tightly coupled to implementation details, you're paying a maintenance tax on every change.

Good: Fewer than 5% of tests break during refactoring

Red flag: Over 20%
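Both ratios from sections 4 and 5 are one-line calculations. In this sketch the function names are hypothetical and the count of broken tests is an illustrative assumption; the 15 developers, 6 QA engineers, and 2,000 unit tests come from the examples earlier in this article.

```typescript
// Developers supported per QA specialist. A team with no dedicated QA
// returns Infinity, matching the "infinite leverage" framing above.
function qaLeverage(developers: number, qaEngineers: number): number {
  if (developers <= 0 || qaEngineers < 0) {
    throw new Error("expected at least one developer and a non-negative QA count");
  }
  return qaEngineers === 0 ? Infinity : developers / qaEngineers;
}

// Share of tests that broke during a behavior-preserving refactor, as a percentage.
function maintenanceBurdenPercent(testsBroken: number, totalTests: number): number {
  if (totalTests <= 0) throw new Error("totalTests must be positive");
  return (testsBroken * 100) / totalTests;
}

const leverage = qaLeverage(15, 6);                       // 2.5, below the 5:1 red-flag line
const refactorBurden = maintenanceBurdenPercent(500, 2000); // 25, above the 20% red-flag line

console.log(`Leverage: ${leverage}:1`);
console.log(`Maintenance burden: ${refactorBurden}%`);
```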

What to Actually Measure

- Deployment frequency (daily? weekly? monthly?)

- Mean time to production after code complete

- Test suite execution time

- Production incident rate normalized by deploy frequency

- Developer-to-QA ratio

- Test maintenance burden percentage

Notice what's not on this list: test coverage percentage, number of test cases, or defects found in testing. Those are activity metrics, not outcome metrics.

When to Actually Hire QA Engineers

Here's the uncomfortable truth: most companies hire their first QA person too early and then scale the team too aggressively.

Don't hire QA when:

- You have fewer than 10 developers

- Your developers don't write tests (fix the culture problem first)

- You don't have CI/CD pipelines (fix the automation problem first)

- You're thinking "we need someone to manually verify features"

Do hire QA when:

- You have complex integration scenarios that developers can't reasonably model

- You need specialized performance, security, or accessibility testing expertise

- You're scaling to multiple teams and need test infrastructure specialists

- You have regulatory compliance requirements that demand independent verification

Notice what all valid reasons have in common: you're hiring specialized expertise that multiplies developer effectiveness, not gatekeepers who slow down delivery.

The Solo Developer's Unfair Advantage

Why do solo developers consistently outship enterprise teams with dedicated QA organizations? It's not because they're better at writing tests. It's because they've eliminated organizational overhead.

- No handoffs - They write code and tests in the same commit. No tickets, no waiting, no coordination.

- No politics - When a test fails, they fix it immediately. No debates about whose responsibility it is.

- No process theater - They optimize for shipping, not for looking like they're being thorough.

- No blame diffusion - They own the outcomes completely, which focuses the mind wonderfully.

The lesson isn't that companies should fire their QA teams and make everyone a solo developer. The lesson is that your testing process should feel like a solo developer's process: fast, integrated, focused on outcomes, and ruthlessly pragmatic.

How to Fix Your $200K Testing Team

If you recognize your organization in this article, here's how to course-correct:

1. Kill the Manual Testing Pipeline

If QA spends time manually clicking through test scenarios that could be automated, you're burning money. Automate it or stop testing it. There is no third option.

2. Shift Quality Left (For Real This Time)

Developers should write tests before QA sees the feature. QA's job is exploratory testing, not verifying that buttons exist. If your QA team is doing work that could be automated, that's a failure of your development process.

3. Make Test Suite Speed a P0 Priority

If your test suite takes more than 15 minutes to run, you've created a bottleneck. Invest in parallelization, better test architecture, and faster CI runners. The ROI is immediate.

4. Replace Coverage Metrics with Outcome Metrics

Stop measuring test coverage percentage. Start measuring: deployment frequency, mean time to production, and production incident rate. These tell you if quality is improving, not if you're running lots of tests.

5. Redefine QA's Role

QA should be test infrastructure engineers, not manual test executors. Their job: make it easier for developers to write good tests, build CI/CD pipelines that prevent bad code from shipping, and conduct exploratory testing for edge cases. Not "verify every feature manually before release."

```text
// Old QA Role: Manual verification
Task: Test login feature
Steps:
  1. Navigate to login page
  2. Enter valid username
  3. Enter valid password
  4. Click login button
  5. Verify dashboard loads
  6. Document results in Jira
Time: 30 minutes per test run

// New QA Role: Enablement
Task: Make login testing fast and reliable
Deliverables:
  1. CI pipeline that runs login tests on every PR
  2. Test harness that provisions test users automatically
  3. Visual regression tests for login UI
  4. Performance benchmarks (login must complete < 500ms)
Time to build: 1 day
Time saved for developers: 30 min × every test run × every developer
ROI: Massive
```

The Uncomfortable Reality

Here's what nobody wants to say out loud: most enterprise QA teams are solving an organizational problem (lack of developer accountability for quality) by creating a worse organizational problem (testing bottlenecks that slow delivery).

The solution isn't more QA engineers or better test management tools or higher coverage percentages. The solution is building a culture where developers own quality end-to-end, and QA provides specialized expertise that makes that ownership easier.

Solo developers don't outship enterprise teams because they're superhuman. They outship them because they've eliminated the organizational tax. The question for CTOs isn't "Should we hire more QA?" It's "How do we make our testing process feel like a solo developer's workflow?"

Fast. Automated. Focused on outcomes. No theater. No politics. Just working software shipped confidently.

Key Takeaways

- Test coverage percentage is a vanity metric - It measures code execution, not user value. Focus on testing behavior, not code paths.

- Long release cycles are political theater - Six-week cycles exist to diffuse blame, not improve quality. Frequent small deployments reduce risk and increase velocity.

- QA teams optimize for job security - When processes justify headcount instead of improving outcomes, you get expensive bottlenecks. Restructure QA as enablement, not gatekeeping.

- Demand outcome metrics - Measure deployment frequency, mean time to production, incident rate, and test suite speed. Activity metrics (test cases written, defects found) are theater.

- Hire QA for expertise, not gatekeeping - QA should be test infrastructure specialists who multiply developer effectiveness, not manual verifiers who slow releases.

- Solo developers have the right model - Own quality end-to-end, automate ruthlessly, optimize for shipping. Make your enterprise process feel like this.
