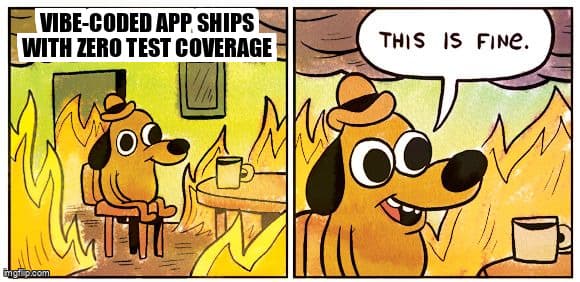

The Vibe Coder's Testing Debt: Why Your AI-Generated App Will Fail in Production (And Who Gets Fired for It)

The executives who cut the QA team to fund AI subscriptions are about to have a very bad quarter.

Picture this: It's a Tuesday. Your VP of Product has just shipped a customer-facing feature. No ticket. No PR review. No staging environment. Just a Lovable prompt, a credit card, and the unshakeable confidence of someone who has never seen a stack trace.

By Thursday, the feature is in production. By Friday, it's in your incident retrospective. By Monday, someone is asking why the QA team didn't catch it.

Welcome to 2026. Vibe coding — the practice of building software through natural language prompts to AI tools — has officially escaped the solopreneur sandbox and entered the enterprise org chart. And the blast radius is landing squarely on your test coverage metrics.

What Is Vibe Coding Testing Debt?

Vibe coding testing debt is untested AI-generated code shipped to production by non-engineers who lack the architectural context to identify what actually needs testing.

Traditional technical debt assumes someone made a deliberate tradeoff — move fast now, clean up later. Vibe coding testing debt is different. There was no deliberate tradeoff. The developer didn't skip writing tests. They didn't know tests needed to exist. The AI generated the code, the AI generated the tests, everything looked green in the demo, and the ticket got closed.

The problem isn't that the code is bad. Sometimes it's quite good. The problem is structural: the person who shipped the code cannot reason about its failure modes, because they didn't make any of the architectural decisions that create those failure modes.

Why Does AI-Generated Testing Miss What Matters?

AI-generated tests replicate the AI's own assumptions. They test what the code does — not what it should handle when reality refuses to cooperate.

This is the core trap. When you ask an AI to write a feature and then ask it to write tests for that feature, you get a closed loop. The AI optimized the implementation for the happy path. Its tests validate that happy path flawlessly. What neither the AI nor the vibe coder considered:

- What happens when the database connection drops mid-transaction?

- What does the UI do with a 503 from the payment processor?

- How does the feature behave under concurrent writes from 500 users?

- What is the timeout behavior when a third-party API takes 30 seconds?

- How does the system handle a malformed JWT in an edge browser?

These aren't exotic failure modes. These are Tuesday afternoon in production. And according to the 2025 State of Software Quality Report by Tricentis, 68% of production incidents in AI-assisted development environments are caused by untested integration boundaries — precisely the failure points AI-generated test suites consistently skip.
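The sketch below makes the gap concrete using one of the questions above: what the UI does when the payment processor returns a 503, times out, or sends a malformed body. The `ProcessorResult` and `CheckoutState` types and the `toCheckoutState` function are hypothetical and do not come from any real payment SDK. A generated test suite would typically assert only the first call at the bottom. The other three are the boundary cases a human tester adds.

```typescript
// Hypothetical outcome of calling a payment processor (not a real SDK type).
type ProcessorResult =
  | { kind: "response"; status: number; body: unknown }
  | { kind: "timeout"; elapsedMs: number }
  | { kind: "network-error"; message: string };

type CheckoutState =
  | { ui: "confirmed"; chargeId: string }
  | { ui: "retry-later"; reason: string }
  | { ui: "contact-support"; reason: string };

// Maps every processor outcome to an explicit UI state instead of assuming success.
function toCheckoutState(result: ProcessorResult): CheckoutState {
  if (result.kind === "timeout") {
    // A timed-out charge may or may not have gone through, so never show "confirmed".
    return { ui: "contact-support", reason: `no answer after ${result.elapsedMs} ms` };
  }
  if (result.kind === "network-error") {
    return { ui: "retry-later", reason: result.message };
  }
  if (result.status === 503) {
    return { ui: "retry-later", reason: "processor unavailable" };
  }
  if (result.status === 200) {
    const body = result.body as { chargeId?: unknown } | null;
    const chargeId = body ? body.chargeId : undefined;
    if (typeof chargeId === "string") {
      return { ui: "confirmed", chargeId };
    }
    // A 200 with a malformed body is a failure, not a success.
    return { ui: "contact-support", reason: "malformed processor response" };
  }
  return { ui: "contact-support", reason: `unexpected status ${result.status}` };
}

// The happy path a generated test usually checks:
const happy = toCheckoutState({ kind: "response", status: 200, body: { chargeId: "ch_1" } });
// The boundaries it tends to skip:
const unavailable = toCheckoutState({ kind: "response", status: 503, body: null });
const slow = toCheckoutState({ kind: "timeout", elapsedMs: 30000 });
const malformed = toCheckoutState({ kind: "response", status: 200, body: "<html>" });
```

The design choice worth copying is that every outcome, including a 200 with a broken body, maps to an explicit state that a test can assert on.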

The Organizational Math Nobody Ran

Here is the decision many organizations made in 2024 and 2025, expressed plainly:

| Decision | Assumed Outcome | Actual Outcome |
|---|---|---|
| Cut 40% of QA headcount | AI tooling covers the gap | AI tools generate features, not coverage strategy |
| Enable non-engineers to ship features | Faster delivery velocity | Faster accumulation of untested code |
| Mandate AI test generation | Coverage metrics maintained | Coverage metrics maintained; coverage quality collapsed |
| Remove manual QA gates | Cycle time reduced by 60% | Incident rate increased by 3-4x in Q3-Q4 |

The relationship between test debt and production incidents is not linear. It's exponential. The first 20% of untested code might cause 5% of incidents. The next 20% causes 20%. Beyond that, you're not shipping software — you're rolling dice at scale.

Gartner's 2025 Application Quality survey found that organizations that reduced QA investment alongside AI tool adoption experienced a 3.2x increase in severity-1 production incidents within 12 months — compared to a 0.8x increase for organizations that maintained or increased QA investment during the same period.

Who Owns Quality When Nobody Wrote the Code Intentionally?

Quality ownership defaults to QA leadership by organizational gravity — even for code no QA engineer had visibility into before it shipped.

This is not a new problem in form, only in scale. Shadow IT existed before vibe coding. Rogue deployments existed before vibe coding. What's new is the volume and the organizational legitimacy. Vibe coding isn't the rogue PM deploying a personal side project. It's the VP of Marketing building a customer-facing integration at the request of the CEO, with official budget approval, and zero engineering review.

In that environment, "who owns quality?" is a political question as much as a technical one. The honest answer at most organizations is: whoever can't plausibly deny responsibility. And that is almost always QA.

The Plausible Deniability Org Chart

- The Vibe Coder: "I'm not an engineer, I used the AI tool the company approved."

- The Product Manager: "Engineering signed off on the tooling."

- The VP of Engineering: "It wasn't in our sprint, QA should have flagged it."

- The QA Director: "We never saw the code." (True. Also irrelevant to the incident timeline.)

- The CTO: "This is why we need better process." (The process they defunded.)

How Should QA Leadership Respond to Vibe Coding at Scale?

QA leaders should implement boundary-based quality ownership: define testable system contracts at integration points, then require coverage of those contracts regardless of how the code was generated.

Fighting vibe coding is not a winning strategy. It is happening with or without QA's blessing. The question is whether QA leadership shapes the quality standards around it, or inherits the consequences of an absence of standards.

Here is a pragmatic framework for QA leaders navigating organizations where vibe coding is now official strategy:

Phase 1: Define the Perimeter (Weeks 1-2)

- Audit all vibe-coded code in production. You cannot protect a perimeter you cannot see. Map every AI-generated feature that shipped without QA review in the last 6 months.

- Identify integration boundaries. List every external system the vibe-coded code touches: databases, payment processors, third-party APIs, authentication services, file storage.

- Score risk. Assign each integration a risk score based on data sensitivity, transaction volume, and failure visibility to end users.
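The scoring step needs nothing fancier than a small function over the integration inventory. This sketch rates each of the three factors named above on a hypothetical 1-3 scale and multiplies them, so an integration that is high on every axis stands out. The `Integration` shape, the scale, the multiplication, and the example inventory are all illustrative assumptions to calibrate against your own incident history.

```typescript
// Hypothetical 1-3 scale: 1 = low, 2 = medium, 3 = high.
type Level = 1 | 2 | 3;

interface Integration {
  name: string;
  dataSensitivity: Level;   // PII, payment data, or credentials rate 3
  transactionVolume: Level; // relative to your own traffic
  failureVisibility: Level; // does a failure surface directly to end users?
}

// Multiplicative score from 1 to 27: an integration that is high on all
// three axes scores 27, while one low factor pulls the whole score down.
function riskScore(i: Integration): number {
  return i.dataSensitivity * i.transactionVolume * i.failureVisibility;
}

// Highest-risk integrations first; these are the first candidates for contract tests.
function rankByRisk(inventory: Integration[]): Integration[] {
  return inventory.slice().sort((a, b) => riskScore(b) - riskScore(a));
}

const inventory: Integration[] = [
  { name: "file storage", dataSensitivity: 2, transactionVolume: 1, failureVisibility: 1 },
  { name: "payment processor", dataSensitivity: 3, transactionVolume: 2, failureVisibility: 3 },
  { name: "auth service", dataSensitivity: 3, transactionVolume: 3, failureVisibility: 3 },
];

const ranked = rankByRisk(inventory).map(i => `${i.name}: ${riskScore(i)}`);
// ["auth service: 27", "payment processor: 18", "file storage: 2"]
```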

Phase 2: Establish Quality Gates (Weeks 3-4)

- Define contract tests for all high-risk integrations. These are owned by QA, not by whoever generated the feature. They test the contract, not the implementation.

- Implement smoke tests for the top 5 user flows. These run on every deploy, regardless of who shipped the code. Failing smoke tests block production.

- Require rollback plans before production approval. Vibe coders cannot debug production incidents. If you can't roll back instantly, you cannot ship.
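A contract test pins down what an integration promises, not how the feature happens to call it. Below is a minimal, dependency-free sketch, assuming a hypothetical contract for a charge response (`id`, `amount` in minor currency units, `status`). Teams often reach for a schema library or a consumer-driven contract tool instead; the idea is the same either way: the checks live with QA and run against whatever code produced the payload.

```typescript
// Hypothetical contract for a payment charge response. QA owns this file,
// not the author of the feature that calls the processor.
interface ChargeContract {
  id: string;
  amount: number; // integer, minor currency units (e.g. cents)
  status: "succeeded" | "pending" | "failed";
}

const ALLOWED_STATUSES: string[] = ["succeeded", "pending", "failed"];

// Returns the list of contract violations; an empty list means the payload conforms.
function checkChargeContract(payload: unknown): string[] {
  if (typeof payload !== "object" || payload === null) {
    return ["payload is not an object"];
  }
  const { id, amount, status } = payload as Record<string, unknown>;
  const errors: string[] = [];
  if (typeof id !== "string" || id.length === 0) {
    errors.push("id must be a non-empty string");
  }
  if (typeof amount !== "number" || !isFinite(amount) || Math.floor(amount) !== amount || amount < 0) {
    errors.push("amount must be a non-negative integer");
  }
  if (typeof status !== "string" || ALLOWED_STATUSES.indexOf(status) === -1) {
    errors.push(`status must be one of: ${ALLOWED_STATUSES.join(", ")}`);
  }
  return errors;
}

// Type guard built on the same checks, for code that consumes the payload.
function isCharge(payload: unknown): payload is ChargeContract {
  return checkChargeContract(payload).length === 0;
}

const good = checkChargeContract({ id: "ch_1", amount: 1999, status: "succeeded" });
const bad = checkChargeContract({ id: "", amount: 19.99, status: "ok" });
// good has no violations; bad has three (empty id, fractional amount, unknown status)
```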

Phase 3: Systematize Coverage (Month 2+)

- Create a "QA Handoff Checklist" for AI-generated features. Before any vibe-coded feature can request production access, the vibe coder must complete this checklist with QA guidance.

- Run exploratory testing sessions on new vibe-coded modules. Assign a QA engineer 2-4 hours per feature to test failure modes the AI didn't consider.

- Track incident-to-source attribution. Every production incident should be tagged with how the responsible code was generated. This data becomes your business case for sustained QA investment.
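The Phase 2 gates and the Phase 3 checklist can be enforced as a single check in the deploy pipeline. This is only a sketch: the `ReleaseCandidate` fields and the `canShip` function are hypothetical, and the real checklist items should come from your own QA guidance. Smoke tests, contract tests, and rollback plans apply to every release; the handoff checklist applies when the code is AI-generated or its source is unknown.

```typescript
// Hypothetical inputs a deploy pipeline could collect before production approval.
interface ReleaseCandidate {
  feature: string;
  codeSource: "engineer-written" | "ai-assisted" | "vibe-coded" | "unknown";
  smokeTestsPassed: boolean;      // top user flows, run on every deploy
  contractTestsPassed: boolean;   // QA-owned tests; true when no high-risk integration is touched
  rollbackPlan: string | null;    // how to revert instantly
  handoffChecklistComplete: boolean;
}

interface GateResult {
  allowed: boolean;
  blockers: string[];
}

function canShip(rc: ReleaseCandidate): GateResult {
  const blockers: string[] = [];
  // These gates apply regardless of who or what generated the code.
  if (!rc.smokeTestsPassed) blockers.push("smoke tests failing");
  if (!rc.contractTestsPassed) blockers.push("contract tests failing");
  if (!rc.rollbackPlan || rc.rollbackPlan.trim() === "") blockers.push("no rollback plan");
  // The handoff checklist is required for AI-generated or unknown-source code.
  if (rc.codeSource !== "engineer-written" && !rc.handoffChecklistComplete) {
    blockers.push("QA handoff checklist incomplete");
  }
  return { allowed: blockers.length === 0, blockers };
}

const result = canShip({
  feature: "partner-integration",
  codeSource: "vibe-coded",
  smokeTestsPassed: true,
  contractTestsPassed: true,
  rollbackPlan: null,
  handoffChecklistComplete: false,
});
// result → { allowed: false, blockers: ["no rollback plan", "QA handoff checklist incomplete"] }
```

Recording `codeSource` on every release also gives you the tag needed for incident-to-source attribution later.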

The Metric That Will Save Your Job

There is one number QA leaders in vibe coding orgs must report to the C-suite, and it is not code coverage percentage. It is incident cost attribution.

Every production incident has a cost: engineer hours to diagnose, engineer hours to fix, customer support volume, potential SLA penalties, and reputational damage. When you can show that incidents traced to untested AI-generated code cost 4x more than incidents in engineer-reviewed, QA-tested code — and you have the data to prove it — the conversation about QA headcount changes.

According to the Consortium for Information and Software Quality (CISQ), poor software quality cost US organizations an estimated $2.41 trillion in 2022. As vibe coding scales through 2025 and 2026, that figure is tracking significantly higher — with untested AI-generated code identified as a primary contributor in their preliminary 2025 analysis.

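```typescript
// Incident Cost Attribution Template
// Track this for every P1/P2 incident

type CodeSource = 'engineer-written' | 'ai-assisted' | 'vibe-coded' | 'unknown';

interface IncidentRecord {
  incidentId: string;
  codeSource: CodeSource;
  qaReviewed: boolean;
  testCoverageAtShip: number; // percentage
  costs: {
    engineerHoursToDetect: number;
    engineerHoursToFix: number;
    customerTicketsGenerated: number;
    estimatedRevenueLoss: number;
    slaPenalties: number;
  };
}

// Org-specific rates for converting hours and tickets into currency.
interface CostRates {
  hourlyEngineerCost: number;
  costPerTicket: number;
}

// Calculated field: all cost components expressed in the same currency.
function totalCost(r: IncidentRecord, rates: CostRates): number {
  const c = r.costs;
  const engineering = (c.engineerHoursToDetect + c.engineerHoursToFix) * rates.hourlyEngineerCost;
  const support = c.customerTicketsGenerated * rates.costPerTicket;
  return engineering + support + c.estimatedRevenueLoss + c.slaPenalties;
}

// Mean total cost of incidents matching a code source and QA-review status.
// Returns NaN when nothing matches, so an empty bucket never reads as zero cost.
function avgCost(records: IncidentRecord[], codeSource: CodeSource, qaReviewed: boolean, rates: CostRates): number {
  const matching = records.filter(r => r.codeSource === codeSource && r.qaReviewed === qaReviewed);
  if (matching.length === 0) return NaN;
  return matching.reduce((sum, r) => sum + totalCost(r, rates), 0) / matching.length;
}

// After 90 days of data, compare:
// avgCost(records, 'vibe-coded', false, rates)
// vs
// avgCost(records, 'engineer-written', true, rates)
//
// In most orgs, this ratio is between 3x and 8x.
// That ratio is your budget justification.
```

What Executives Need to Hear (But Won't Like)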

The decision to fund AI tooling and cut QA headcount is not irrational. The assumptions behind it are just incorrect, and those assumptions are about to be corrected by production.

The core assumption: AI tools generate quality-assured output. The reality: AI tools generate plausible output. Plausible is not the same as correct, and correct is not the same as production-safe.

A more accurate mental model: vibe coding is a first draft generator at extraordinary speed. First drafts require editing. Shipped code requires testing. Speed of generation does not reduce the need for validation — it increases the volume of material requiring validation.

The Analogy That Lands in Boardrooms

Imagine hiring 10 junior developers who each ship 5x the code of a senior engineer. Now imagine none of them have their code reviewed. Your velocity metric looks extraordinary. Your production stability looks like a war zone. Vibe coding is the same dynamic — except the junior developer is a language model that cannot attend the incident retrospective.

Key Takeaways

- Vibe coding creates a structural testing blind spot. Developers who didn't make architectural decisions cannot reason about what to test. This is not a skill gap — it's a knowledge gap that cannot be closed by AI tooling alone.

- AI-generated tests validate the happy path the AI already optimized for. They are not a substitute for adversarial, boundary, and integration testing performed by humans who understand failure modes.

- Test debt compounds non-linearly. Organizations that cut QA investment alongside AI adoption see production incident rates increase 3x or more within 12 months — not gradually, but sharply when the first major load event hits.

- QA leadership must shift from code reviewer to quality architect. You cannot review every line of vibe-coded code. You can define the contracts, gates, and metrics that make quality visible regardless of who generated the code.

- Incident cost attribution is your most powerful leadership tool. Data that shows untested AI-generated code costs 4-8x more per incident than reviewed, tested code converts the QA investment conversation from philosophical to financial.

Ready to strengthen your test automation?

Desplega.ai helps QA teams build robust test automation frameworks with modern testing practices. Whether you're starting from scratch or improving existing pipelines, we provide the tools and expertise to catch bugs before production.

Frequently Asked Questions

What is vibe coding testing debt?

Vibe coding testing debt is the accumulation of untested AI-generated code shipped to production by non-engineers who lack the architectural knowledge to identify what needs testing.

Why do AI-generated tests fail to catch production bugs?

AI-generated tests mirror the AI's own happy-path assumptions. They test what the code does, not what it should handle — leaving edge cases, race conditions, and failure modes completely uncovered.

How quickly does vibe coding test debt cause production incidents?

Most organizations see their first significant production incident within 60-90 days of adopting vibe coding at scale, typically triggered by the first real-world load spike or unexpected user behavior.

Who owns quality when developers didn't write the code intentionally?

Ownership defaults to QA leadership by organizational gravity. Without explicit policy, QA teams inherit triage responsibility for code whose architectural decisions they had no visibility into.

What is the minimum viable test strategy for vibe-coded applications?

Contract testing at API boundaries, smoke tests covering the 5 most common user flows, and a rollback-ready deployment strategy. Expand coverage iteratively as the codebase stabilizes.
